Between 2014 and 2016, a series of projects explored how algorithmic structures could create filmic order without predefining meaning — from public API experiments to collective generative systems shown at Glasgow, Brno, and the Shenzhen Biennale.

Research · Generative Systems · Installation

My Role

The work began as a studio experiment and evolved into a set of collective systems under the Design Displacement Group (DDG). Each project extended the same question: how far can algorithmic organisation replace direct artistic control while still producing a coherent cinematic experience?

1 — Item to Item

The first experiments tested whether public APIs could drive filmic sequence. We treated services like Amazon and YouTube as narrative engines rather than data sources. Scripts followed their recommendation paths, pulling stills and clips to form associative chains — one item leading to the next. The result was less a film than a moving diagram of networked taste. It revealed how algorithmic logic already performs montage at scale. That architecture — access → traversal → assembly → output — became the foundation for later systems.
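The four stages can be sketched in a few lines of Node-style JavaScript. This is a minimal illustration, not the original scripts: the `recommendations` map is a hypothetical stand-in for a live recommendation API, and the function names `access`, `traverse`, `assemble`, and `output` are chosen here only to mirror the stages named above.

```javascript
// Hypothetical stand-in for a recommendation API: item -> related items.
// A real service would be queried over the network instead.
const recommendations = new Map([
  ["lamp", ["desk", "bulb"]],
  ["desk", ["chair", "lamp"]],
  ["chair", ["rug"]],
]);

// access: ask the (mock) service what it recommends for an item.
function access(itemId) {
  return recommendations.get(itemId) || [];
}

// traversal: follow the first unvisited recommendation until the chain
// ends or reaches maxLength.
function traverse(startId, maxLength) {
  const chain = [startId];
  const visited = new Set(chain);
  while (chain.length < maxLength) {
    const last = chain[chain.length - 1];
    const next = access(last).find((id) => !visited.has(id));
    if (next === undefined) break;
    chain.push(next);
    visited.add(next);
  }
  return chain;
}

// assembly: turn the chain into an ordered shot list with a fixed duration.
function assemble(chain, secondsPerItem) {
  return chain.map((id, index) => ({
    id,
    start: index * secondsPerItem,
    duration: secondsPerItem,
  }));
}

// output: here only a printable edit list, one line per shot.
function output(shots) {
  return shots.map((shot) => `${shot.start}s ${shot.id}`).join("\n");
}
```

Calling `output(assemble(traverse("lamp", 10), 3))` yields a four-line edit list, because the chain stops as soon as no unvisited recommendation remains.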

Early experiments with API-driven narratives — Dribbble, YouTube, Amazon, Getty Images. Each API returned a different visual grammar.

Detail view of a YouTube API traversal. Extracted keywords steer the next query, building an associative chain across videos.

Cards filled with interdependent content: one API's output triggers the next query. Maps, product images, and text fragments sit side by side.

Algorithmically generated "characters" — 3D collages assembled from recommendation chains across ArtRank, Capture, and others. No manual composition, only structural rules applied to found material.

Installation view, cleaned up. Same space, cables and clutter removed.

2 — Experiments in data-driven narratives

The early chain-traversals revealed algorithmic logic but stayed inside a single platform’s associative grammar — one item leading to the next within the same recommendation graph. Two shorter experiments tested whether narrative could emerge from cross-domain mapping instead: forcing unrelated datasets into shared time. “Be the First Person to Like This” built word chains between YouTube videos with zero views, linking them through title or description overlaps — still accumulative, but selecting for obscurity rather than relevance. “Out in the Open” took the larger step, mapping live stock price movements onto animal footage. Here, the system no longer followed lateral associations. It produced meaning through disjunction — the gap between finance data and nature imagery became the narrative itself. That move — coherence through structural juxtaposition rather than semantic continuity — prefigured the core logic of No Exit.

Be the First Person to Like This — built a word chain from two YouTube videos with zero views but similarities in title or description.
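A word chain of this kind can be approximated with a greedy overlap search. The sketch below is an assumption about the mechanism, not the original code: the `videos` array is invented sample data, and only titles (not descriptions) are compared.

```javascript
// Invented sample data; a real run would fetch titles and view counts.
const videos = [
  { title: "Test recording garden", views: 0 },
  { title: "Garden hose repair", views: 0 },
  { title: "Birthday 2014", views: 12 },
  { title: "Hose reel unboxing", views: 0 },
];

// Select for obscurity: keep only videos nobody has watched.
const zeroViews = videos.filter((video) => video.views === 0);

// Split a title into lowercase words, ignoring very short ones.
function words(title) {
  return title.toLowerCase().split(/[^a-z0-9]+/).filter((w) => w.length > 2);
}

// Words that two titles have in common, in the order they appear in a.
function sharedWords(a, b) {
  const inB = new Set(words(b));
  return [...new Set(words(a))].filter((w) => inB.has(w));
}

// Greedy chain: each next video must share a word with the previous one.
function wordChain(pool, startIndex) {
  const remaining = pool.filter((_, i) => i !== startIndex);
  const chain = [{ video: pool[startIndex], link: null }];
  for (;;) {
    const current = chain[chain.length - 1].video;
    const i = remaining.findIndex(
      (video) => sharedWords(current.title, video.title).length > 0
    );
    if (i === -1) break;
    const [next] = remaining.splice(i, 1);
    chain.push({ video: next, link: sharedWords(current.title, next.title)[0] });
  }
  return chain;
}
```

With the sample data, `wordChain(zeroViews, 0)` links the three unwatched videos through the words "garden" and "hose".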

Out in the Open — illustrated live stock price changes with animal footage.
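The cross-domain mapping can be sketched as a lookup from price movement to footage pool. Everything specific here is an assumption made for illustration: the category names, the thresholds in `classify`, and the file names in `footage` are hypothetical, and a static price series stands in for the live feed.

```javascript
// Hypothetical footage pools, keyed by the kind of price movement.
const footage = {
  surge: ["stampede.mp4", "birds-taking-off.mp4"],
  rise: ["grazing-deer.mp4"],
  flat: ["sleeping-cat.mp4"],
  fall: ["retreating-crab.mp4"],
  crash: ["diving-falcon.mp4"],
};

// Classify a relative price change (0.012 means +1.2 %).
// The thresholds are arbitrary assumptions.
function classify(change) {
  if (change >= 0.02) return "surge";
  if (change > 0.002) return "rise";
  if (change <= -0.02) return "crash";
  if (change < -0.002) return "fall";
  return "flat";
}

// Map a price series to a clip sequence: one clip per tick after the first.
function clipsForPrices(prices) {
  const clips = [];
  for (let i = 1; i < prices.length; i++) {
    const change = (prices[i] - prices[i - 1]) / prices[i - 1];
    const pool = footage[classify(change)];
    clips.push(pool[i % pool.length]); // rotate through the pool
  }
  return clips;
}
```

Nothing in the mapping knows what the footage depicts, so any meaning in the sequence comes from the pairing itself.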

3 — No Exit (Design Displacement Group)

When the Design Displacement Group formed, the focus shifted from revealing relations to obscuring them. The project No Exit reused the early orchestration logic but replaced public data with material from group members. Footage, text, and sound files were uploaded to a shared Dropbox, each placed into folders named after the five acts of a classical opera: Exposition, Rising Action, Climax, Falling Action, and Resolution. Contributors never saw how their files would connect; the algorithm decided that.

The system built new sequences by combining files across these acts, erasing stylistic continuity and authorship. What held the film together was timing and metadata, not narrative intent. The software was deliberately simple — written in JavaScript / Node, using lightweight HTML playback — but could run for days without restart. Each screening used a different dataset, creating a new cut every time. Versions of No Exit were shown in Glasgow, Brno, and other venues, always under changing conditions. The algorithm stayed constant; the material kept evolving.
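The act-based recombination might look roughly like the following. This is a sketch under stated assumptions rather than the No Exit source: the `library` object stands in for the shared folder listing, the file names are invented, and the seeded generator is added here only so that a given cut can be reproduced.

```javascript
const ACTS = ["Exposition", "Rising Action", "Climax", "Falling Action", "Resolution"];

// Hypothetical folder listing: act name -> uploaded files.
const library = {
  "Exposition": ["city-aerial.mp4", "intro-text.txt"],
  "Rising Action": ["crowd.mp4", "drone.wav", "harbour.mp4"],
  "Climax": ["storm.mp4"],
  "Falling Action": ["empty-street.mp4", "hum.wav"],
  "Resolution": ["sunrise.mp4", "outro-text.txt"],
};

// Small seeded pseudo-random generator, so a cut can be replayed.
function seededRandom(seed) {
  let a = seed >>> 0;
  return function () {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Build a cut: walk the acts in order and pick one file per act at random.
// Contributors decide what is in each folder; the code decides what meets what.
function buildCut(folders, seed, rounds) {
  const random = seededRandom(seed);
  const cut = [];
  for (let round = 0; round < rounds; round++) {
    for (const act of ACTS) {
      const files = folders[act] || [];
      if (files.length === 0) continue; // skip empty folders
      cut.push({ act, file: files[Math.floor(random() * files.length)] });
    }
  }
  return cut;
}
```

Swapping the contents of `library` while leaving `buildCut` untouched corresponds to the pattern described above: the algorithm stays constant and the material changes.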

No Exit — three-channel projection, Graphic Design Festival Scotland, Glasgow. Aerial cityscapes, scraped libretto subtitles, and found footage. Each screen runs independently, synchronised only by structural timing.

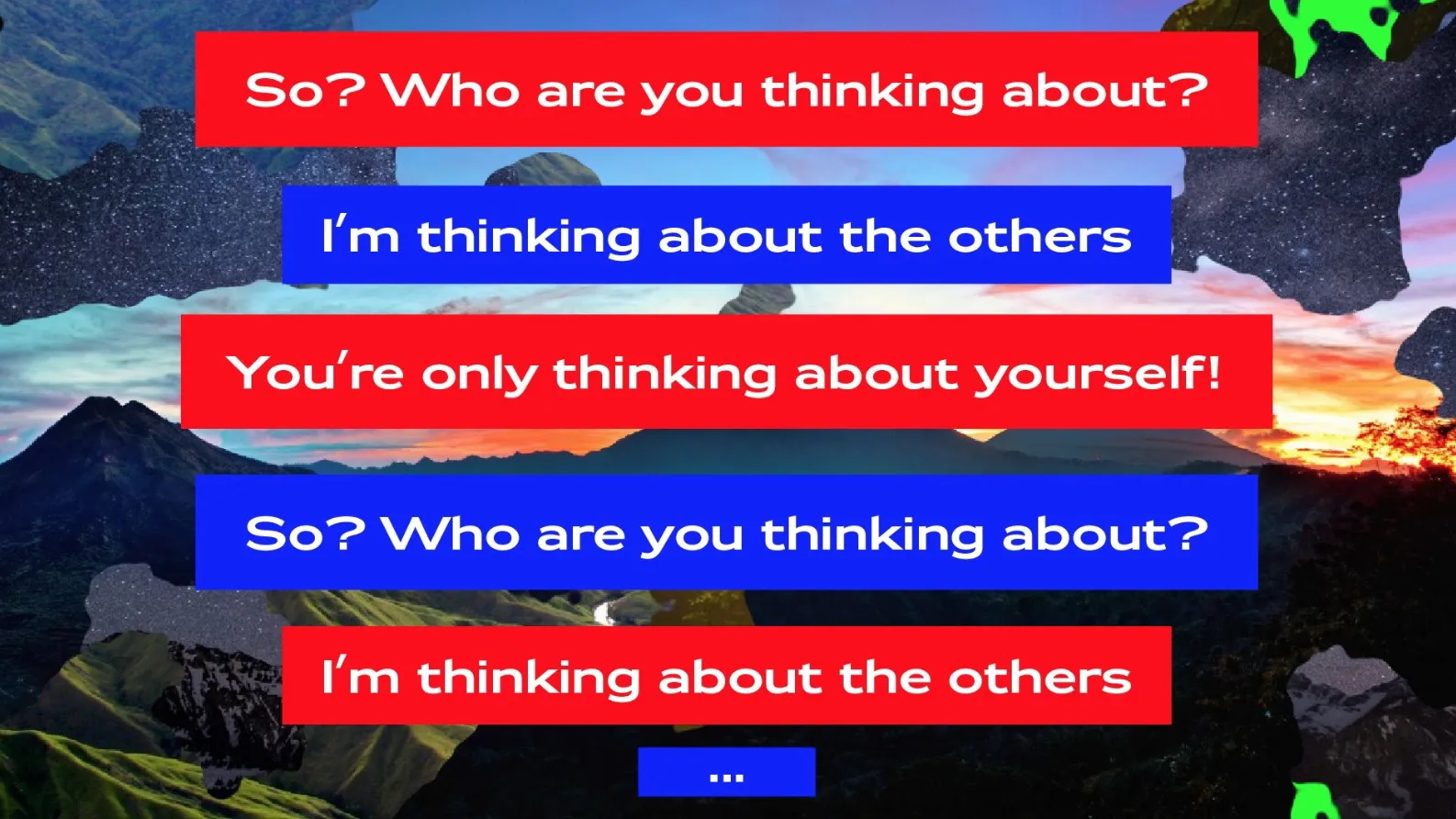

Divider screen — "RESURRECTION, part 5." Generated title cards break the continuous feed into acts, giving the film a rhythm the algorithm doesn't otherwise provide.

Dialogue screens. Scraped libretto fragments layered over collaged landscapes: "So? Who are you thinking about?" — "I'm thinking about the others."

4 — Workshop System (DDG)

A later version translated the same framework into a teaching system. Students produced short clips and uploaded them into the same opera-based folder structure. Once the files were in place, control shifted entirely to the algorithm. Participants could watch how the system recombined their work but had no influence on the outcome. The goal was not collaboration but observation: to see how unrelated fragments could form something resembling narrative simply through structural rhythm. The engine ran locally, without network dependencies, to remain robust in classroom conditions.

Workshop interface. Topic filters — History, Camouflage, Propaganda, Provocation, Currencies — and quantity sliders let participants shape the input without controlling the output.
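One way to read the filters and sliders is as a function that narrows the input pool before the engine takes over. The sketch below assumes that each slider sets how many clips a topic may contribute; the clip metadata and the `buildInputPool` name are hypothetical.

```javascript
// Hypothetical clip metadata: each upload is tagged with one topic.
const workshopClips = [
  { file: "coins.mp4", topic: "Currencies" },
  { file: "archive-reel.mp4", topic: "History" },
  { file: "banknotes.mp4", topic: "Currencies" },
  { file: "poster-wall.mp4", topic: "Propaganda" },
  { file: "old-map.mp4", topic: "History" },
  { file: "receipt.mp4", topic: "Currencies" },
];

// quantities: topic -> slider value (0 excludes the topic entirely).
// Participants shape what goes in; sequencing happens elsewhere.
function buildInputPool(clips, quantities) {
  const pool = [];
  for (const [topic, quantity] of Object.entries(quantities)) {
    const matching = clips.filter((clip) => clip.topic === topic);
    pool.push(...matching.slice(0, quantity));
  }
  return pool;
}
```

For example, `buildInputPool(workshopClips, { History: 1, Currencies: 2, Propaganda: 0 })` returns one History clip and two Currencies clips, and the order in which they later appear on screen is left to the engine.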

5 — Rise & Shine News Channel (Shenzhen Biennale)

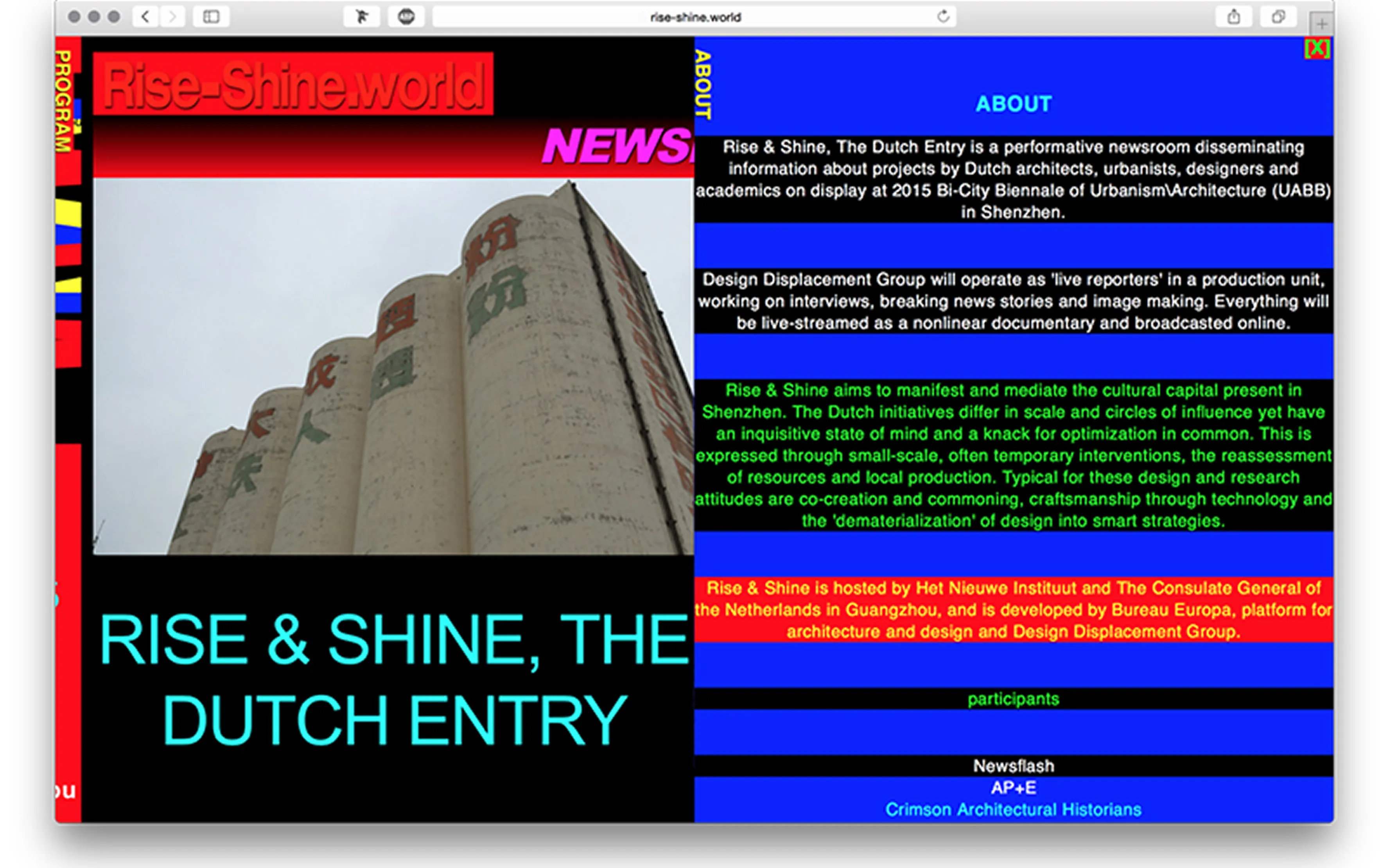

For the Shenzhen Biennale, we reconfigured the framework into a live editorial system called Rise & Shine. Artists and designers acting as “reporters” uploaded short clips about the exhibited works. The algorithm selected, sequenced, and reassembled these fragments into a continuous newsfeed projected in the exhibition. The installation restarted automatically each morning and required no manual intervention. Like No Exit, it blurred the boundary between contribution and authorship: content entered through human choice, structure through code.
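The daily restart can be illustrated with a small scheduling helper. How the installation actually scheduled its restart is not documented here, so this is only one plausible approach: `msUntilNextRestart` is a hypothetical name, and the restart hour is an assumed parameter.

```javascript
// Milliseconds from `now` until the next occurrence of restartHour:00
// in local time. If that time has already passed today, use tomorrow.
function msUntilNextRestart(now, restartHour) {
  const next = new Date(
    now.getFullYear(),
    now.getMonth(),
    now.getDate(),
    restartHour, 0, 0, 0
  );
  if (next.getTime() <= now.getTime()) {
    next.setDate(next.getDate() + 1);
  }
  return next.getTime() - now.getTime();
}

// Possible usage (restartFeed is a hypothetical function):
// setTimeout(restartFeed, msUntilNextRestart(new Date(), 8));
```

Recomputing the delay after every restart, instead of using a fixed 24-hour interval, keeps the schedule aligned with the wall clock even if a restart runs late.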

Rise & Shine News Channel — the website running at the Bi-City Biennale of Urbanism/Architecture, Shenzhen, 2015. A performative newsroom where DDG members acted as live reporters.

Process and Principles

All iterations shared a few consistent traits. First, minimal code, maximal recombination — fewer rules made the system more flexible; behaviour emerged from interaction, not scripting. Second, automated continuity — whether in a film, workshop, or exhibition, the system maintained itself, restarting, reassembling, regenerating without manual repair. Third, structural opacity — relationships between clips were intentionally hidden, encouraging interpretation rather than explanation.

These constraints made the framework adaptable to different contexts without redesign. It could perform as artwork, teaching tool, or curatorial device simply by changing its input source.

Across these projects, the work evolved from curiosity about algorithmic montage into a method for producing open narrative systems. Each step reduced authorship and increased structural autonomy. Instead of designing images, we designed conditions for images to relate. The code still runs. The method (input, parameters, output) turned out to be more durable than any single project that used it.